2026-03-20

AI Translation Security and Data Privacy: What to Check Before Choosing a Provider

Your team wants to use AI translation. But Legal wants to know where the data goes. IT needs to confirm it meets your security policy. Meanwhile, Procurement is asking about GDPR and the EU AI Act. How do you handle their requirements?

This article gives you a structured checklist for evaluating AI translation providers on security and data privacy - and a set of signals that distinguish enterprise-ready platforms from general-purpose tools that were not designed for business use.

Before adopting any AI translation platform for business use, verify five things:

- That your data is not used to train the public model.

- That GDPR compliance is confirmed in writing.

- That the platform supports SSO and audit logs.

- That a data processing agreement is available.

- That the vendor can explain how confidential content is handled in managed delivery.

If a vendor cannot answer these clearly before the pilot, that is your answer.
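For teams that track vendor reviews in a script or spreadsheet export, the five checks can be written down as a simple pass/fail gate. The sketch below is illustrative only: the class name, field names, and functions are hypothetical and do not correspond to any provider's API. It assumes each check is recorded as true only once the vendor has supplied written documentation.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class VendorAnswers:
    """One flag per pre-pilot check (hypothetical field names).

    Set a flag to True only when the vendor has documented the answer.
    """
    excludes_data_from_training: bool
    gdpr_confirmed_in_writing: bool
    supports_sso_and_audit_logs: bool
    dpa_available: bool
    explains_managed_delivery_handling: bool


def unmet_checks(answers: VendorAnswers) -> list[str]:
    """Return the names of the checks that are still undocumented."""
    return [name for name, ok in asdict(answers).items() if not ok]


def ready_for_pilot(answers: VendorAnswers) -> bool:
    """A vendor passes the gate only if every check is documented."""
    return not unmet_checks(answers)


if __name__ == "__main__":
    vendor = VendorAnswers(
        excludes_data_from_training=True,
        gdpr_confirmed_in_writing=True,
        supports_sso_and_audit_logs=False,
        dpa_available=True,
        explains_managed_delivery_handling=True,
    )
    print(ready_for_pilot(vendor), unmet_checks(vendor))
```

The gate is deliberately all-or-nothing rather than a weighted score, which matches the point above: one unanswered item is enough to hold the pilot.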

Why AI Translation Creates Specific Security Risks

Most AI translation tools that teams adopt informally - browser-based, general-purpose - were built for consumer or light professional use.

When an employee pastes a confidential contract, unreleased product specs, or a patient record into one of these tools, there is rarely a formal data agreement in place, and the content may be processed or used to improve the underlying model.

The risk is not theoretical. Since 2023, many large organizations have reported incidents involving employees sharing sensitive data through public AI tools without realizing the implications.

Since the EU AI Act entered into force in August 2024, regulators are treating AI-assisted data processing as a compliance area that requires the same controls as other enterprise software.

The evaluation question is not "is AI translation safe?" It is: "does this specific platform handle data with the controls our program requires?"

The Compliance Checklist: What to Verify Before Any Pilot

Use this checklist in your vendor evaluation. Request documentation for each item - do not accept verbal assurances.

Regulatory Compliance

- Your content is not used to train the underlying AI model

- Clear data processing agreement (DPA) available before pilot

- Data hosting location is defined - with residency options if required

Enterprise Security Controls

- Single sign-on (SSO) available on enterprise plans

- Audit logs: Who translated what, when, with which output and review outcome

- Role-based access controls for team and project management

- API access available with authentication and access management
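When reviewing a vendor's audit log documentation, it can help to have a concrete picture of what a single entry might contain. The sketch below is one possible shape, not any platform's actual schema: every field name is an assumption, and storing SHA-256 hashes instead of the raw text is a design choice made here so the log itself does not become another copy of confidential content.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class TranslationAuditEntry:
    """Hypothetical audit record: who translated what, when, with what result."""
    user_id: str          # who
    source_sha256: str    # what (hash of the source text, not the text itself)
    output_sha256: str    # which output (hash of the translation)
    source_lang: str
    target_lang: str
    timestamp_utc: str    # when, ISO 8601 in UTC
    review_outcome: str   # e.g. "approved", "rejected", "pending"


def _sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def make_entry(user_id: str, source_text: str, output_text: str,
               source_lang: str, target_lang: str, review_outcome: str,
               now: Optional[datetime] = None) -> TranslationAuditEntry:
    """Build an entry; `now` can be injected to make tests deterministic."""
    now = now or datetime.now(timezone.utc)
    return TranslationAuditEntry(
        user_id=user_id,
        source_sha256=_sha256(source_text),
        output_sha256=_sha256(output_text),
        source_lang=source_lang,
        target_lang=target_lang,
        timestamp_utc=now.isoformat(),
        review_outcome=review_outcome,
    )


def to_json_line(entry: TranslationAuditEntry) -> str:
    """Serialize one entry as a single JSON line for an append-only log."""
    return json.dumps(asdict(entry), sort_keys=True)
```

A useful question for the vendor is which of these fields their logs actually capture, and whether the logs can be exported in a form your own security tooling can read.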

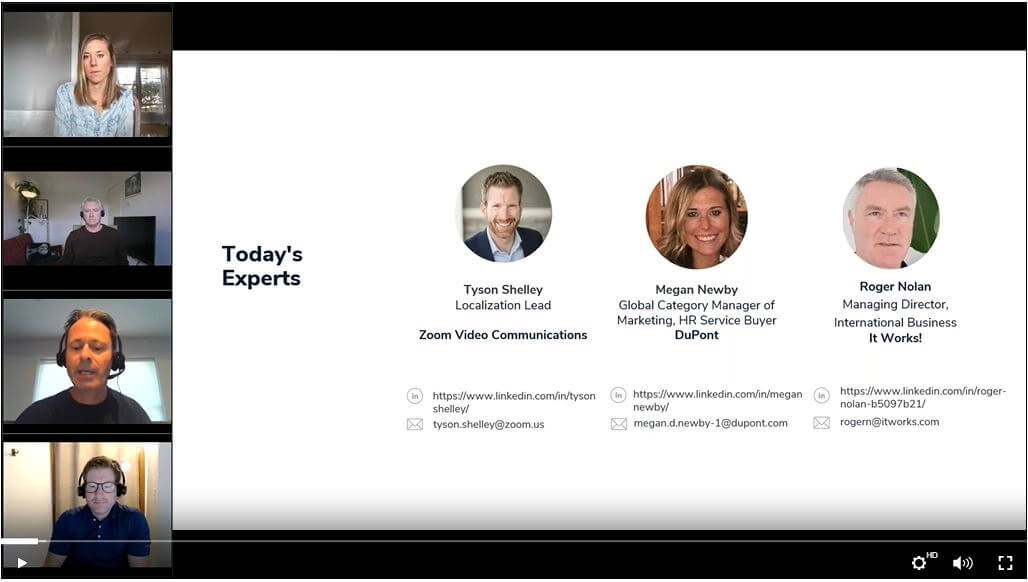

Managed Delivery Services (Like Lia Services)

- Dedicated environment for sensitive or regulated programs

- Named linguist accountability with documented review criteria

- Full traceability from source content to final translated output

- Custom SLA including security and confidentiality terms

What to Watch for in Vendor Evaluations

- Vague claims like "we take security seriously" or "enterprise-grade" without supporting documentation

- No clear answer on whether your data is used for public model training - silence on this point is a red flag

- SSO, audit logs, and DPA available only at the highest tier or behind a separate negotiation

- No documented process for how expert linguists handle confidential content in managed delivery programs

How Enterprise-Grade Platforms Handle This Differently

General-purpose AI tools are designed for volume and accessibility. Enterprise AI translation platforms are designed for governance. The difference shows up in how data controls are structured - not as optional add-ons, but as part of the core product architecture.

With Lia, data protection is not a tier upgrade. Your content is excluded from model training by default. GDPR compliance and data processing agreements are available before any pilot. Enterprise plan features - SSO, audit logs, dedicated CSM, API access - are defined and documented, not negotiated case by case.

For programs involving regulated content, Lia Services adds a dedicated environment, contractual SLA, named linguist accountability, and full audit traceability. The governance framework is not abstract - it is operationalized in the delivery structure.

Key Takeaways

- The biggest security risk with AI translation is using a general-purpose tool that processes confidential data without a formal agreement or data controls.

- Data training exclusion, GDPR compliance, and a DPA should be confirmed before any pilot involving real business content.

- Enterprise security features - SSO, audit logs, API access - are necessary for programs with multiple users, teams, or regulated content.

- Post-EU AI Act, procurement teams are asking these questions earlier in the evaluation cycle. Having your answers ready accelerates the process.

- Use the checklist above in your vendor review. Ask for documentation, not assurances.

Evaluating Lia for Your Security and Compliance Requirements?

We can provide our standard security documentation and walk you through how Lia handles data protection, GDPR compliance, and enterprise security controls. For regulated programs, we can scope a Lia Services engagement with a contractual SLA and dedicated governance.
