Up to 40% cost savings with smart tech

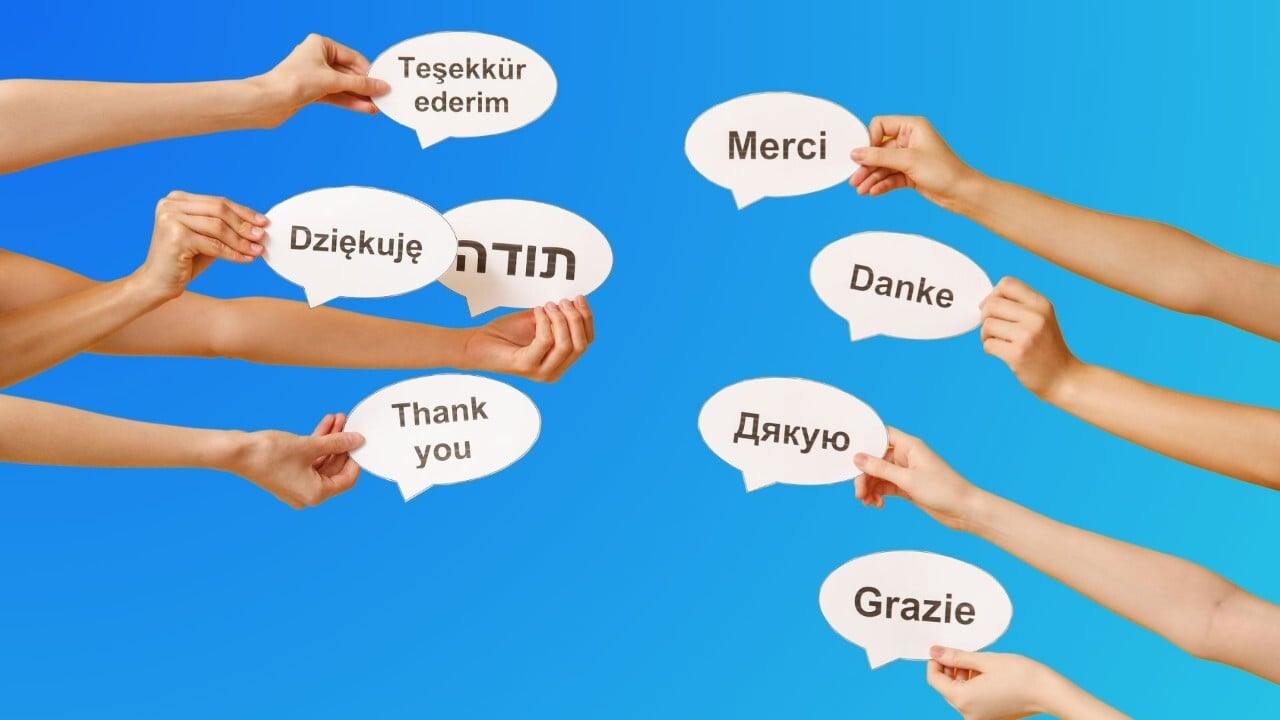

Enterprise Translation Services

AI-powered precision. Human-verified accuracy. Enterprise-grade compliance and security trusted worldwide.

100% ISO-certified and globally compliant

98% client satisfaction across all industries

Your Global Partner for Translation, Content & Localization

Why Enterprises Choose Acolad Translation Services

AI + Human Expertise

For secure, consistent multilingual content.

Scalable Delivery

Any volume, any language, any deadline.

Certified Quality

Adherence to standards such as ISO 17100 for guaranteed precision.

Measurable ROI

Proving translation’s business value.

Seamless Connectivity

Centralized content and automated processes.

Regulated-Industry Expertise

Specialized linguists for Life Sciences, Legal, and Finance.

Translation Services & Technology for All Content Types

Smart

Fast AI translation with automated optimization for clarity and tone.

| Ideal for | Workflow |
|---|---|
| Internal and high-volume content where speed matters. | AI Translation → Post-editing → Quality Estimation |

Pro

AI translation refined by a native linguist for fluency, accuracy, and tone consistency.

| Ideal for | Workflow |
|---|---|
| Customer-facing materials, marketing copy, product content. | AI Translation → Post-editing → Quality Estimation → Human Review |

Expert

AI translation reviewed by a linguist with domain-specific expertise.

| Ideal for | Workflow |
|---|---|
| Technical, regulated, or high-stakes content (legal, life sciences, financial). | AI Translation → Post-editing → Quality Estimation → Specialist Review |

Creative

Fully human adaptation, preserving intent, emotion, and cultural nuance.

| Ideal for | Workflow |
|---|---|
| Campaigns, branding, creative storytelling. | Human Transcreation |

Lia Services: Enterprise Access to AI and Human Expertise

Simplify global content at scale with Lia, our AI-powered platform.

- Enterprise gateway with expert consulting & managed services

- Combines AI speed with human precision

- Built for complex, high-stakes content needs

Translation Expertise Where, When, and How You Need It

Translate manuals, product specifications, patents, and knowledge bases with validation from subject-matter experts and in-context quality assurance.

Guarantee audit-ready accuracy for regulatory filings, audits, and tenders through certified translations recognized for legal and compliance purposes.

Tailor your digital presence to resonate with diverse audiences across different regions, ensuring cultural relevance, improved SEO performance, and consistent brand identity.

Ensure your Clinical Outcome Assessment (COA) translations are linguistically accurate and culturally adapted to support the successful global deployment of your clinical trials.

Translation Optimization

Expert review and intelligent language quality tools to ensure your multilingual content is accurate, consistent, and compliant, regardless of volume.

We help you find, select, and integrate the best technology to streamline processes, centralize resources, and automate tasks.

From off-the-shelf solutions to tailor-made APIs and connectors, we empower your content management systems with multilingual capabilities.

Enterprise Results You Can Measure

40+ Markets Expansion

Ponsse, a Finnish exporter, grew across 40+ markets, including China and the USA.

50% Cost Savings

Tetra Pak saved over 50% on translation costs while also improving time-to-market.

2x Higher Quality

Swiss Precision Diagnostics, owner of Clearblue, improved quality while cutting costs.

“Working with Acolad’s team to centralize our translation program has allowed us to put ‘Quality First’ in every aspect of our company. Our teams cannot thank you enough.”

“We chose Acolad to take over our complete terminology translation – for more than 30 languages. After 2 years, we cut translation costs by more than 50%.”

Secure Translation. Compliant Content.

ISO 17100:2015

ISO/IEC 27001:2022

GDPR Compliance

Governance & Traceability

Ramping Up for a New Global Translation Project?

Contact us today to discuss your language requirements.

Related Resources

New to translation and localization? We have answers.

How much do translation services cost?

Our pricing is tailored to your project’s specific needs. Factors like language pair, content type, word count, subject matter, and turnaround time all influence cost. We provide clear, upfront quotes with no hidden fees. By combining AI automation with certified human expertise, we help optimize costs while ensuring high-quality, consistent results. For ongoing needs, we also offer volume discounts and customized service models to maximize ROI.

How do you ensure translation quality and accuracy?

We guarantee superior quality through a multi-layered approach combining technology and expertise. Our workflows are 100% ISO-certified (ISO 17100:2015) for consistency and full compliance in regulated industries. We leverage intelligent AI tools alongside dedicated project teams and expert linguists who review, adapt, and quality-check translations for accuracy, context, and compliance, which standalone AI cannot guarantee.

How secure are your translation services?

Your data and content are protected by the highest enterprise-grade standards. We maintain ISO/IEC 27001:2022 certified information security management and ensure full GDPR compliance, with encryption and access control. We also operate with an NDA-by-default policy and provide full governance and traceability to support audits and compliance requirements.

What’s the difference between AI translation and human translation?

Acolad offers three models: AI Translation for speed, Human-Centered Translation for maximum precision, and a Hybrid approach for cost savings. What sets us apart is combining AI speed with certified human expertise: our certified linguists review, adapt, and quality-check translations, providing the accuracy, context, and compliance essential for complex global content.

What industries does Acolad serve?

Acolad serves global organizations across all industries. We have extensive expertise in highly regulated sectors such as Life Sciences, Legal, Manufacturing, and Public Services. Our ISO-certified processes and industry-specialized linguists ensure accuracy and full regulatory alignment for high-stakes content such as clinical trials and financial reporting.

How can I get a quote or start a project?

Starting a project is simple: contact us today to discuss your specific language requirements. We offer seamless integrations with leading CMS, PIM, DAM, and marketing automation systems through our API-first approach. This allows you to centralize content, automate processes, reduce manual file handling, and speed up delivery. For critical, large-scale projects, we set up dedicated project teams, AI-powered pipelines, and round-the-clock workflows to meet the tightest deadlines.
