2026-04-02

How Does AI Dubbing Work? And What It Means for Your Enterprise Video Strategy

Most enterprise teams producing multilingual video face the same constraint: localizing every asset with traditional dubbing is slow, expensive, and hard to scale. AI dubbing changes that equation for the majority of corporate content. Before deciding whether it fits your program, it helps to understand what the technology actually does and what that means in practice.

What Is AI Dubbing?

AI dubbing is the process of converting a video's spoken audio into another language using artificial intelligence, without studio sessions or voice actor scheduling. It chains three technologies in sequence: speech-to-text, machine translation, and voice synthesis. The result is a localized audio track that can be produced at a fraction of the cost of traditional dubbing, with significantly shorter turnaround times.

How AI Dubbing Works: Speech-to-Text, Machine Translation and Voice Synthesis

AI dubbing workflows usually run the same three steps in sequence.

Speech-to-text transcribes the original spoken audio into written text. This is the most critical stage: any error here, a misheard word or a missed term, carries through everything that follows and is harder to catch once the audio is generated. According to the Slator AI Dubbing Report 2025, errors introduced at the transcription stage propagate through the entire pipeline, making upstream accuracy the primary quality lever.

Machine translation converts that text into the target language. For enterprise content with brand terminology, product names, or regulated language, a human review of the translation before the next step is the standard way to prevent errors from reaching the final audio.

Voice synthesis converts the translated text into spoken audio. The system draws from a voice library, clones the original speaker's voice, or generates a new AI voice. Quality varies by language pair, which matters when selecting a partner for content that reaches external audiences.

Understanding the chain matters for one practical reason: quality depends on each step, not just the final output. A provider who only checks the end result is harder to work with than one who builds review into the process at each stage.
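To make the chain concrete, here is a minimal Python sketch of the three steps with optional review hooks between them. It is an illustration only: the function and parameter names (`dub`, `review_transcript`, `review_translation`) are hypothetical, and the toy stand-ins at the bottom replace real speech-to-text, translation, and synthesis engines, which this article does not specify.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical stage signatures; a real provider's API will differ.
Transcriber = Callable[[bytes], str]        # audio -> source-language text
Translator = Callable[[str, str], str]      # (text, target_lang) -> translated text
Synthesizer = Callable[[str, str], bytes]   # (text, target_lang) -> audio
Reviewer = Callable[[str], str]             # human or automated correction pass


@dataclass
class DubbingResult:
    transcript: str
    translation: str
    audio: bytes


def dub(
    source_audio: bytes,
    target_lang: str,
    transcribe: Transcriber,
    translate: Translator,
    synthesize: Synthesizer,
    review_transcript: Optional[Reviewer] = None,
    review_translation: Optional[Reviewer] = None,
) -> DubbingResult:
    """Run the three stages in order, applying a review pass after each text stage."""
    transcript = transcribe(source_audio)
    if review_transcript is not None:
        # Fix errors here, before they carry through the later stages.
        transcript = review_transcript(transcript)

    translation = translate(transcript, target_lang)
    if review_translation is not None:
        # Check brand terms and regulated language before audio is generated.
        translation = review_translation(translation)

    audio = synthesize(translation, target_lang)
    return DubbingResult(transcript, translation, audio)


# Toy stand-ins so the sketch runs without any external service.
def toy_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")


def toy_translate(text: str, target_lang: str) -> str:
    return f"[{target_lang}] {text}"


def toy_synthesize(text: str, target_lang: str) -> bytes:
    return text.encode("utf-8")


def fix_brand_term(text: str) -> str:
    # Hypothetical product name, used only to show a transcript correction.
    return text.replace("acme cloud", "Acme Cloud")


result = dub(
    b"welcome to acme cloud",
    "fr",
    toy_transcribe,
    toy_translate,
    toy_synthesize,
    review_transcript=fix_brand_term,
)
print(result.translation)
```

Because the correction is applied to the transcript, both the translation and the synthesized audio inherit it. Skipping that hook would leave the uncorrected term in every downstream output, which is the propagation effect described above.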

AI Dubbing Benefits for Enterprise Teams: Speed, Scale, and Cost

The most direct business impact is scale. A library of training modules, a product video series, or a set of market communications that would take months to localize through traditional dubbing can move through an AI dubbing pipeline significantly faster. For organizations that need to reach employees, customers, or partners across multiple markets at the same time, that speed advantage is material.

Cost is the second driver. Buyers interviewed for the Slator AI Dubbing Report 2025 reported rates up to 80% lower than traditional dubbing. For most corporate content, that reduction does not mean compromised quality; it means that assets which were previously too expensive to localize at all become viable. The practical effect is not just cheaper localization of existing assets, but access to markets and audiences that were simply out of reach before.
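A quick worked calculation shows why a lower rate translates into reach rather than only savings. The budget and per-minute rate below are hypothetical figures chosen for round numbers, and 80% is the upper bound buyers reported, so treat the result as a best case that also leaves out the cost of any human review.

```python
def minutes_covered(budget: int, rate_per_minute: int, reduction_pct: int = 0) -> int:
    """Whole minutes of video a budget covers when the rate is cut by reduction_pct.

    Integer arithmetic is used to avoid floating-point rounding on the percentage.
    """
    if not 0 <= reduction_pct < 100:
        raise ValueError("reduction_pct must be between 0 and 99")
    reduced_rate_x100 = rate_per_minute * (100 - reduction_pct)
    return (budget * 100) // reduced_rate_x100


# Hypothetical inputs: a budget of 10,000 and a traditional rate of 100 per minute.
traditional_minutes = minutes_covered(10_000, 100)
ai_minutes = minutes_covered(10_000, 100, reduction_pct=80)
print(traditional_minutes, ai_minutes)
```

At an 80% lower rate, the same budget covers five times as many minutes of video. A smaller reduction shrinks that multiple accordingly.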

Elearning and training content, product and marketing videos, and internal communications are the corporate use cases with the highest AI dubbing adoption. These share a common feature: they are typically narrated with the speaker off-screen, which is the configuration where AI dubbing delivers the strongest output. For a broader view of what multilingual video localization covers beyond dubbing, see Acolad's multimedia localization services.

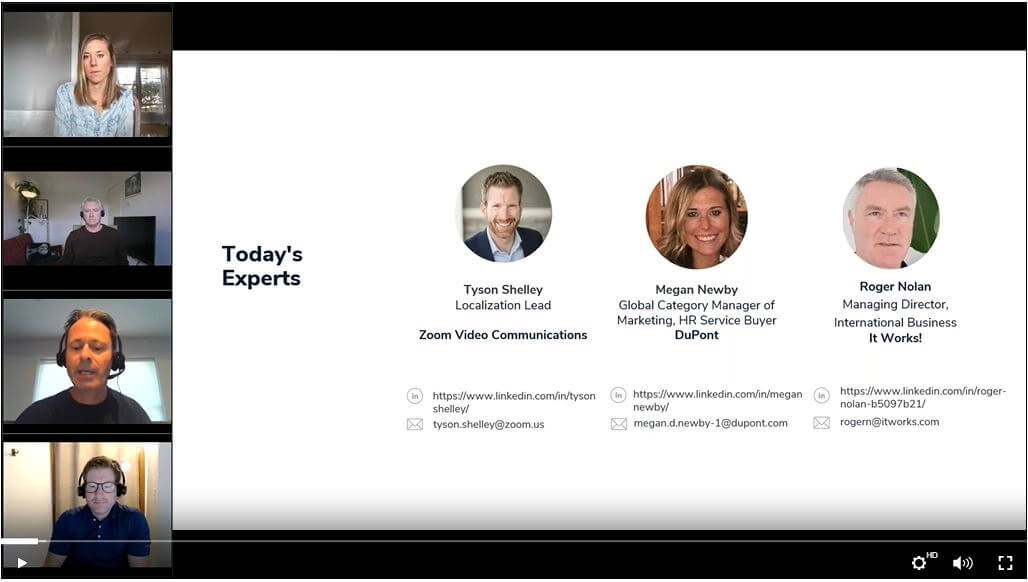

When to Use AI Dubbing: Human Review, Lip Sync, and Content Fit

Fully automated output works well for internal content with limited distribution and low reputational risk: onboarding videos, internal briefings, process updates. For anything reaching customers, partners, or regulators, a human review pass is standard practice. A Localization Lead at a major TV broadcaster told Slator in 2025: "Quality checks are still required, and not just a spot check. You need a full runtime quality check." A reviewer catches mistranslations of technical or brand terms, unnatural pauses, and voice inconsistencies that would be noticeable to a native speaker.

The cost of that review is a fraction of the overall saving generated by the automation. The model that works is not a choice between AI and human. It is AI for speed and scale, human expertise for quality control on the content that matters.
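The routing rule described in this section can be written down as a small check. This is a sketch under stated assumptions: the audience labels and the `needs_human_review` function are hypothetical, and a real program would likely weigh more factors than audience and reputational risk.

```python
from typing import Iterable

# Audiences the article treats as external-facing (labels are illustrative).
EXTERNAL_AUDIENCES = {"customers", "partners", "regulators"}


def needs_human_review(audiences: Iterable[str], low_reputational_risk: bool) -> bool:
    """Return True when a full human review pass should precede publication."""
    if set(audiences) & EXTERNAL_AUDIENCES:
        # Anything reaching customers, partners, or regulators gets reviewed.
        return True
    # Internal content skips review only when reputational risk is low.
    return not low_reputational_risk


onboarding = needs_human_review(["employees"], low_reputational_risk=True)
product_launch = needs_human_review(["employees", "customers"], low_reputational_risk=True)
print(onboarding, product_launch)
```

An internal onboarding video passes straight through, while anything that also reaches customers is flagged for a full runtime check.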

AI dubbing is distinct from voiceover, which does not consider on-screen lip movements as part of the output. If your content features visible speakers, voiceover and dubbing serve different purposes, and the right approach depends on the content format and audience expectations. Lip sync, which aligns audio timing with the speaker's visible mouth movements, is available but adds cost and complexity that are rarely justified outside high-visibility brand content.

Key Takeaways

- Quality depends on every step in the chain. The transcription stage is the most critical: errors there propagate through the entire workflow (Slator AI Dubbing Report 2025).
- Buyers report cost reductions of up to 80% compared to traditional dubbing, making previously unviable content localization possible (Slator AI Dubbing Report 2025).
- Elearning, training content, product videos, and internal communications are the strongest enterprise fits. Off-screen narration produces the cleanest output.
- Human review is standard for external-facing content. A full runtime quality check remains necessary before any external publication.
- AI dubbing and voiceover are not the same. Understanding the difference helps select the right approach for each content type.
